Experiments with AI-Generated Media

During a course with the MIT Media Lab, held by the Fluid Interfaces Group, we experimented with different GAN models for generating image, video, and audio deepfakes.

Motivation (Why)

I enrolled in the MIT Media Lab course Experiments with AI-Generated Media, eager to learn about advances in the AI world and to find out more about the latest on Deepfakes for Good.

Over the five weeks of this awesome course, I met many bright, talented, and creative minds and was reassured that there are people who love thinking about anti-disciplinary topics that interest me too.

Description and Reflection

We dabbled in many different concepts: AI, deep learning, GAN models, and media deepfakes. The overall goal was to have a healthy discussion about the advent of deepfake media and how to use it for the good of the community.

We were divided into learning groups based on geographic location so that we could work closely with others. In these groups we held discussions on three facets, Computation, Creativity, and Society, and the discussions were of top quality; this part in particular was very interesting for me.

Here, I briefly describe the topics we covered and the results I generated while learning.

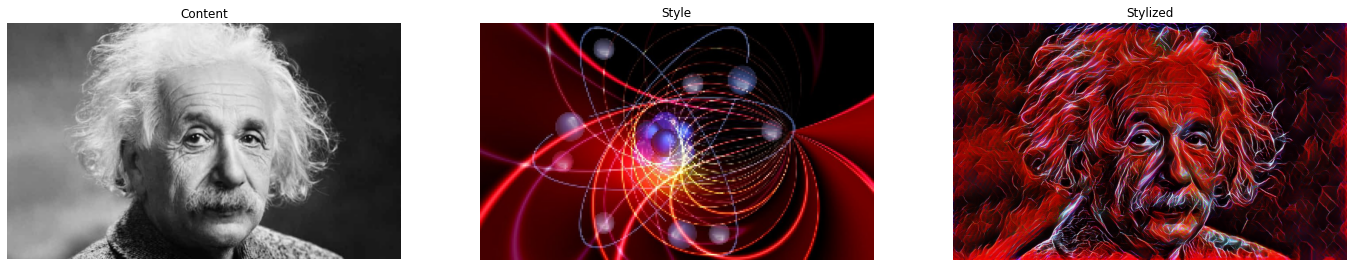

Style Transfer

This model piqued my interest in the potential of generative AI in the art world. The Style Transfer model takes the style of one picture (its textures, colors, and brushstrokes) and re-renders a target picture with those features while keeping the target's content. This is remarkable in itself: anyone with even a spark of imagination can now produce results of a very high standard.
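One common way to capture "style", from the neural style transfer work of Gatys et al., is to compare Gram matrices (channel-to-channel correlations) of CNN feature maps. The sketch below is not the course notebook's code: it assumes NumPy, uses random arrays in place of real VGG features, and its function names are illustrative. It shows why a Gram matrix describes texture rather than layout: shuffling pixel positions leaves the style loss at zero while the content loss jumps.

```python
import numpy as np


def gram_matrix(features: np.ndarray) -> np.ndarray:
    """Channel-to-channel correlations of a (C, H, W) feature map."""
    c, h, w = features.shape
    flat = features.reshape(c, h * w)  # one row of activations per channel
    return flat @ flat.T / (h * w)     # (C, C), normalised by the number of pixels


def style_loss(generated: np.ndarray, style: np.ndarray) -> float:
    """Mean squared difference between the Gram matrices (texture statistics)."""
    diff = gram_matrix(generated) - gram_matrix(style)
    return float(np.mean(diff ** 2))


def content_loss(generated: np.ndarray, content: np.ndarray) -> float:
    """Mean squared difference between the raw feature maps (spatial layout)."""
    return float(np.mean((generated - content) ** 2))


# Random arrays stand in for features taken from a pretrained CNN such as VGG.
rng = np.random.default_rng(0)
style_feats = rng.standard_normal((8, 16, 16))

# Shuffle pixel positions: the layout changes, the channel correlations do not.
perm = rng.permutation(16 * 16)
shuffled = style_feats.reshape(8, -1)[:, perm].reshape(8, 16, 16)

print(style_loss(shuffled, style_feats))    # essentially zero
print(content_loss(shuffled, style_feats))  # clearly non-zero
```

In a full style transfer, the style loss is usually summed over several layers, combined with a content loss against the target picture, and the output image is optimized by gradient descent to lower both.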

I experimented with many different pictures and styles; transferring a Lovecraftian style onto rainbow doughnuts was a lot of fun. I then imagined how to make the style transfer more symbolic, so I transferred an atom-styled picture onto Albert Einstein, sort of merging physics with the physicist.

I also experimented with changing seasons using the style transfer model.

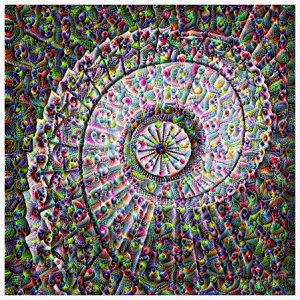

Deep Dream

DeepDream, the trippy algorithm, amplifies whatever patterns a network's layers already respond to, and the related concept of an activation atlas (a map of what a network's neurons respond to) was very intriguing. It made me wonder: what if we stored genome data in a similar way and used an AI to explore its combinations, to see whether we could artificially create features similar to those of a real person? This is something I would love to research further. For now, though, I created a trippy staircase leading deep within.
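DeepDream runs gradient ascent on the input image so that a chosen layer of a pretrained CNN (GoogLeNet/Inception in Google's original release) responds more strongly. The toy below is only a sketch under loud assumptions: it uses NumPy, replaces the network with a single random linear + ReLU layer so the gradient can be written by hand, and skips the multi-scale "octaves" and jitter that real implementations add. The names `W`, `activation_objective`, and `deep_dream` are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
# Toy stand-in for one layer of a pretrained CNN: 64 "pixels" -> 32 ReLU units.
W = rng.standard_normal((32, 64)) / 8.0


def activation_objective(x: np.ndarray) -> float:
    """DeepDream-style objective: half the squared L2 norm of the layer's activations."""
    a = np.maximum(W @ x, 0.0)
    return 0.5 * float(a @ a)


def objective_grad(x: np.ndarray) -> np.ndarray:
    """Gradient of the objective w.r.t. the input; inactive units have a == 0."""
    a = np.maximum(W @ x, 0.0)
    return W.T @ a


def deep_dream(x: np.ndarray, steps: int = 20, lr: float = 0.1) -> np.ndarray:
    """Gradient ascent on the input, normalising each step by the mean gradient size."""
    x = x.copy()
    for _ in range(steps):
        g = objective_grad(x)
        x += lr * g / (np.abs(g).mean() + 1e-8)
    return x


x0 = rng.standard_normal(64) * 0.1  # stand-in for the starting image
x1 = deep_dream(x0)
print(activation_objective(x0), "->", activation_objective(x1))
```

Dividing the step by the mean absolute gradient keeps the update size roughly constant from layer to layer and image to image, which is why DeepDream-style code commonly normalizes the gradient this way.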

Neural Doodle

This particular model sparked my imagination, and I wondered: what if I used it to do a face swap? I tried this with two of my favorite 'One Piece' anime characters, Zoro and Luffy.

The model took its sweet time (52 minutes!) to produce this output, which made me think about one of the limitations of AI: the immense processing power it needs. I hope we soon see the day when this kind of tweaking takes seconds, enabling real-time Neural Doodling.

Another interesting observation was that the matplotlib plot showed a rainbow-like effect while plotting the new image based on the provided masks. This is a powerful tool: you can build a scene from simple mask-like images and then let an AI fill them in to complete the scene!
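The mask-guided idea can be illustrated with per-region style statistics. Note that this swaps in a simpler technique: Champandard's Neural Doodle attaches the semantic map to the CNN features and matches patches, and the course notebook may work differently again. The sketch below assumes NumPy and uses per-region Gram matrices, so each doodle colour only borrows style from the matching region of the style image. All function names are hypothetical, and real feature maps are downsampled, so the label map would need resizing to the feature map's height and width first.

```python
import numpy as np


def doodle_to_labels(rgb: np.ndarray, palette: list) -> np.ndarray:
    """Map each pixel of an (H, W, 3) colour doodle to its palette index (-1 if none)."""
    labels = np.full(rgb.shape[:2], -1, dtype=int)
    for index, color in enumerate(palette):
        labels[np.all(rgb == np.asarray(color), axis=-1)] = index
    return labels


def masked_gram(features: np.ndarray, labels: np.ndarray, region: int) -> np.ndarray:
    """Gram matrix of a (C, H, W) feature map using only pixels labelled `region`."""
    c = features.shape[0]
    selected = features.reshape(c, -1)[:, labels.reshape(-1) == region]  # (C, N_region)
    return selected @ selected.T / max(selected.shape[1], 1)


def region_style_loss(gen_feats, gen_labels, style_feats, style_labels, regions) -> float:
    """Sum of per-region Gram losses: each region is compared only with its counterpart."""
    total = 0.0
    for region in regions:
        diff = masked_gram(gen_feats, gen_labels, region) - masked_gram(style_feats, style_labels, region)
        total += float(np.mean(diff ** 2))
    return total


rng = np.random.default_rng(0)
feats = rng.standard_normal((4, 6, 6))  # stand-in for CNN features
labels = np.zeros((6, 6), dtype=int)
labels[:, 3:] = 1  # left half = region 0, right half = region 1
print(region_style_loss(feats, labels, feats, labels, [0, 1]))  # 0.0
```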

Big GAN

BigGAN is trained on ImageNet, and experimenting with it gives a better sense of the Fidelity-Diversity trade-off. Fidelity refers to image quality, whereas Diversity refers to the model's ability to create a variety of images. We could choose different categories and control the number of images generated, the truncation, and the noise seed to compare the results; lower truncation values trade diversity for fidelity. I noticed that faces and text, especially far away in the scene, were not generated clearly. My guess is that these are harder to generate, or need more training to come out smooth and detailed.
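The truncation setting refers to BigGAN's "truncation trick": noise vectors are drawn from a normal distribution, and any entry whose magnitude exceeds a threshold is resampled. A smaller threshold keeps samples near the centre of the distribution, giving higher fidelity but less diversity. The sketch below assumes NumPy and a latent size of 128 (the real model's size may differ). Implementations parameterise truncation differently, for example by fixing the cut-off and scaling the sample, so this is not the exact code from the course.

```python
import numpy as np


def truncated_noise(batch_size: int, dim: int, threshold: float, seed=None) -> np.ndarray:
    """Sample z ~ N(0, I) and resample every entry whose magnitude exceeds `threshold`."""
    if threshold <= 0:
        raise ValueError("threshold must be positive")
    rng = np.random.default_rng(seed)  # fixing the seed reproduces the same samples
    z = rng.standard_normal((batch_size, dim))
    too_big = np.abs(z) > threshold
    while too_big.any():
        z[too_big] = rng.standard_normal(int(too_big.sum()))
        too_big = np.abs(z) > threshold
    return z


# Smaller thresholds keep samples near the mean: higher fidelity, lower diversity.
for threshold in (0.4, 1.0, 2.0):
    z = truncated_noise(batch_size=4, dim=128, threshold=threshold, seed=0)
    print(threshold, round(float(z.std()), 3))
```

The printed standard deviation shrinks with the threshold, which is the "less diversity" half of the trade-off; the "more fidelity" half only shows up once these vectors are fed through the generator.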

I also experimented with interpolating between these images, converting one image into another. For example, I ordered a Chicken Burrito from the AI Kitchen (a chicken picture gradually converted into a burrito picture). The result was hilarious: the AI passed through several odd in-between steps before arriving at the burrito image. I wonder what Gordon Ramsay would have to say about this?
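A class-conditional GAN like BigGAN takes both a noise vector and a class vector, so one simple way to morph "chicken" into "burrito" is to interpolate both in a straight line and render each intermediate pair. The sketch below assumes NumPy, a 128-dimensional noise vector, and 1000 one-hot ImageNet classes. The class indices used are placeholders, not the real "hen" or "burrito" ids, and the generator call itself is left out.

```python
import numpy as np


def one_hot(index: int, num_classes: int = 1000) -> np.ndarray:
    """Class vector with a single 1 at `index`."""
    v = np.zeros(num_classes)
    v[index] = 1.0
    return v


def interpolate(a: np.ndarray, b: np.ndarray, steps: int) -> np.ndarray:
    """Return `steps` vectors evenly spaced on the straight line from a to b."""
    alphas = np.linspace(0.0, 1.0, steps)[:, None]  # (steps, 1) so it broadcasts over a and b
    return (1.0 - alphas) * a + alphas * b


rng = np.random.default_rng(0)
z_a, z_b = rng.standard_normal(128), rng.standard_normal(128)
# Placeholder class indices (hypothetical); look up the real ids in the ImageNet label list.
y_a, y_b = one_hot(0), one_hot(1)

z_path = interpolate(z_a, z_b, 8)
y_path = interpolate(y_a, y_b, 8)
# Each (z_path[i], y_path[i]) pair would be fed to the generator to render one frame.
```

The in-between class vectors are part chicken, part burrito, so the generator is asked to draw blends that belong to neither category, which helps explain the odd intermediate images.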

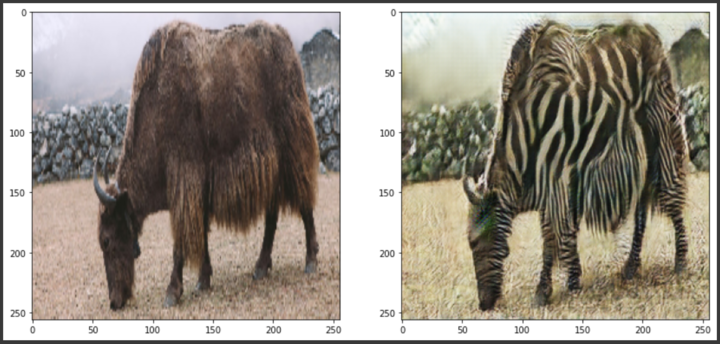

Cycle GAN

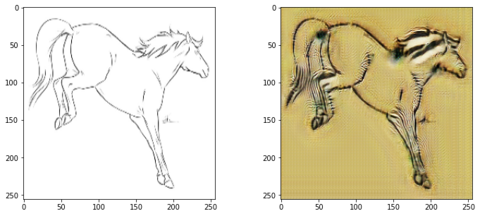

CycleGAN learns to translate images between two domains without needing paired examples, cycling from domain A to B and from B back to A. Here we used the horse2zebra model. But rather than doing the simple conversion, I converted a yak into a 'Zebryak' and also tried it out a bit in the fashion world.

I noticed that the model seems to key on specific colors and textures and paints zebra-like stripes on them rather than recognizing shapes: during my experiment with a horse outline, it didn't recognize the shape.
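The "cycle" in CycleGAN is enforced by a cycle-consistency loss: translating A to B and back should return the original image, and likewise for B to A. In the full model this term is weighted and added to two adversarial losses. The sketch below assumes NumPy and replaces the two image-to-image networks with hypothetical toy functions on 1-D arrays, just to show how the loss is computed.

```python
import numpy as np


def cycle_consistency_loss(x_a, x_b, G, F) -> float:
    """L1 cycle loss: A -> B -> A and B -> A -> B should both return the input."""
    forward = np.mean(np.abs(F(G(x_a)) - x_a))   # e.g. horse -> zebra -> horse
    backward = np.mean(np.abs(G(F(x_b)) - x_b))  # e.g. zebra -> horse -> zebra
    return float(forward + backward)


# Toy "generators" standing in for the two image-to-image networks (hypothetical).
def G(x):
    return 2.0 * x + 1.0       # domain A -> domain B


def F_good(y):
    return (y - 1.0) / 2.0     # exact inverse of G


def F_bad(y):
    return y / 2.0             # not an inverse of G


x_a = np.linspace(-1.0, 1.0, 5)
x_b = np.linspace(0.0, 3.0, 5)
print(cycle_consistency_loss(x_a, x_b, G, F_good))  # 0.0
print(cycle_consistency_loss(x_a, x_b, G, F_bad))   # 1.5
```

Because this loss only asks that the round trip reproduce the input, nothing forces the model to understand shapes, which fits the observation that it stripes colored regions rather than recognizing a horse outline.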

Progressive Growing GAN

The ProgGAN (Progressive Growing GAN) model we used is specific to generating human faces, and here again we explored the Fidelity-Diversity trade-off: increasing the noise clearly gives more diversity and less fidelity. Here is a compiled GIF of all the different faces that were created while interpolating between two AI-generated female faces.
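Face-morph GIFs like this are often made with spherical linear interpolation (slerp) instead of a straight line. High-dimensional Gaussian latent vectors lie close to a sphere, and the straight-line midpoint falls inside it with an unusually small norm, whereas slerp follows the arc between the two vectors. The sketch below assumes NumPy and a 512-dimensional latent; the course notebook may have used plain linear interpolation instead.

```python
import numpy as np


def slerp(a: np.ndarray, b: np.ndarray, t: float) -> np.ndarray:
    """Spherical linear interpolation between latent vectors a and b at fraction t."""
    a_unit = a / np.linalg.norm(a)
    b_unit = b / np.linalg.norm(b)
    omega = np.arccos(np.clip(np.dot(a_unit, b_unit), -1.0, 1.0))  # angle between them
    sin_omega = np.sin(omega)
    if sin_omega < 1e-6:
        return (1.0 - t) * a + t * b  # (anti)parallel vectors: fall back to linear
    return (np.sin((1.0 - t) * omega) * a + np.sin(t * omega) * b) / sin_omega


rng = np.random.default_rng(1)
z_a, z_b = rng.standard_normal(512), rng.standard_normal(512)
# Ten latent vectors from face A to face B; each would be rendered as one GIF frame.
frames = [slerp(z_a, z_b, t) for t in np.linspace(0.0, 1.0, 10)]
```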


NVIDIA Imaginaire

NVIDIA has released Imaginaire, a library of generative algorithms with pretrained models for deepfake creation and beyond. This was super fun to work on! I couldn't tweak the pretrained Face Re-enactment model itself, so I used Photoshop to change the face in the original image (a photograph of the very expressive Trevor Noah) and drove its facial movements from another video. The results were good but not accurate. I considered this an entry point to the video deepfakes we were about to dive into next.

First Order Motion Model

Here we created deepfakes based on the work of Aliaksandr Siarohin, applying the motion from a video to a still image. It fascinated me, and I describe it further on another project page, Laughing Old Man.

The technique is a form of motion transfer, and the concept behind it is related to transfer learning. Transfer learning and domain adaptation refer to the situation where what has been learned in one setting is exploited to improve generalization in another setting. In practice, this means we can extract facial expressions from a video and apply them to photographs, or make a body in an image move by capturing the motion of a similar body in a video.
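The First Order Motion Model represents motion with a small set of learned keypoints plus local affine transformations, and in its relative mode the source image's keypoints are moved by how far the driving video's keypoints have moved since its first frame. The sketch below, assuming NumPy, shows only that keypoint arithmetic; it ignores the local affine terms and the dense-motion and generator networks that actually warp the image. The keypoint values are made up, and normalised [-1, 1] coordinates are an assumption.

```python
import numpy as np


def transfer_keypoints(kp_source, kp_driving, kp_driving_initial, movement_scale=1.0):
    """Relative motion transfer: move the source keypoints by the driving video's
    displacement since its first frame, optionally rescaled."""
    return kp_source + movement_scale * (kp_driving - kp_driving_initial)


# Hypothetical keypoints as (x, y) pairs in normalised [-1, 1] image coordinates.
kp_source = np.array([[-0.2, -0.1], [0.2, -0.1], [0.0, 0.3]])  # e.g. eyes and mouth in the photo
kp_driving_initial = np.array([[-0.3, 0.0], [0.1, 0.0], [-0.1, 0.4]])

# In a later driving frame the whole face has moved right and slightly up.
kp_driving = kp_driving_initial + np.array([0.05, -0.02])

print(transfer_keypoints(kp_source, kp_driving, kp_driving_initial))
```

Transferring displacements rather than absolute positions is what lets a stick figure drive Buzz Lightyear: the source keeps its own proportions and only inherits the motion.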

Below are the results I produced. I realized that this is an extremely powerful method that could help animators be far more prolific.

Here, we have Captain Levi from the anime 'Attack on Titan' acting out of character, and a very Buzzed Lightyear mimicking the motion of a stick figure.

Audio Deepfakes

Lastly, moving to audio deepfakes, we experimented with a voice-cloning model to create a synthetic voice. To test its lack of diversity, I made a voice clone speak Hindi; the AI clearly had an English accent, suggesting that its training data does not let it adapt to diverse languages.

This gave me the idea of an interface AI, a 'DirectorGAN', that would first work out which accent and language to use before synthesizing the deepfake voice. Here, I made the 'Angrez' (Hindi for 'English person') AI speak Hindi and say 'Hey you, why are you looking at me like that? Don't you have manners?'
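The first step of such a DirectorGAN could be a router that guesses the language and picks a matching voice model. The sketch below is entirely hypothetical: the model names are placeholders rather than real checkpoints, and the "detection" is a crude script check for the Devanagari Unicode block (U+0900 to U+097F). Romanized Hindi would slip through it, so a real system would need a proper language-identification model.

```python
# Hypothetical registry: the model names are placeholders, not real checkpoints.
VOICE_MODELS = {
    "hi": "hindi_voice_model",
    "en": "english_voice_model",
}


def detect_language(text: str) -> str:
    """Crude guess: any Devanagari character -> Hindi, otherwise English."""
    for ch in text:
        if "\u0900" <= ch <= "\u097f":  # Devanagari Unicode block
            return "hi"
    return "en"


def pick_voice_model(text: str) -> str:
    """Route the text to the voice model registered for its detected language."""
    return VOICE_MODELS[detect_language(text)]


print(pick_voice_model("नमस्ते"))        # hindi_voice_model
print(pick_voice_model("Hello there"))  # english_voice_model
```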

Using the Mellotron model, I also created my very own Batman voice cautioning, 'Be careful what you say on record; you never know how it will be used.' I also made the AI sing Linkin Park's famous song 'Numb'.

Future Applications

Generative AI is an immensely powerful tool with multifaceted benefits. Let me divide these into several realms; most of the ideas may be completely fictional, and I am going to wander well beyond the boundaries of media.

Scientific & Technological Realm

Generated chip architecture – Using currently available chips and their performance parameters, an AI could generate architectures that maintain performance while optimizing power consumption.

What-if Gene – Wouldn’t it be fascinating to know which other species could have survived, or might have lived, on this earth? Maybe there were humans with blue skin and orange eyes, or maybe we could bring the dodo back to this planet. While trying to solve these mysteries, we might observe something very peculiar that humans alone could never discover.

Social & Emotional Wellbeing Realm

Deep Help – Aimed mainly at autistic people, people with dyslexia, and people with similar learning disabilities, this AI would generate sounds and visuals to soothe them and help them adapt to the structures and systems around them. It could also be used to comfort and offer counseling to anxious people and people with self-harming tendencies.

MemoirAI – To help people with PTSD or grieving the death of a loved one, this AI would use past data to figuratively transport them to a safe place deep in nostalgia, with a person they had always relied upon who is no longer around.

Deep Culture – Embracing different cultures and languages, this AI would represent a spectrum instead of reducing everything to a single parameter. It would also help with effective education and with spreading an understanding of diversity and the existence of variety (this is of utmost importance!)

Creative, Imaginative & Conceptual Realm

Deep Consciousness – Can we make an AI aware of what it is doing? Aware of its latent space? Of what it is trying to achieve, and perhaps why? It is my long-held, innate desire that we could invoke the same thoughts in an AI. Maybe on that journey we will understand why we choose what we choose, and why we fear some things but love others. We may even discover the key to transferring consciousness to a distant, artificially generated body, teleporting in a way, and living (being conscious) endlessly.

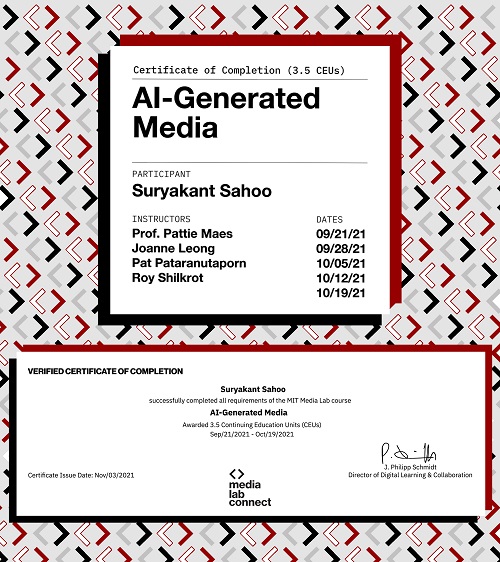

Certificate


Creators

Suryakant Sahoo